In connection with the launch of the Horizon Workspace 1.8 (the need to perform an upgrade), and start-up testing of our new solution based in part on the Workspace, I decided to completely remodel the environment in which it operates. At first she went database. For some time, we are owners of VMware vFabric components, including vPostgres, of which we are very happy (because who would not be happy with owned production support for PostgreSQL database). In the prepared solution, I used three installations of vPostgres Appliance 9.3.2, two instances operating mode is the main and replica (as a final backup), and a third-party acting actively. Nodes operating in activ/activ mode, are replicated by pgPool-II (installed separately on CentOS). In such a scheme pgPool-II is a SPOF, so it is best to prepare two systems and use the load balancer (in our case, on the production is BIG-IP F5). Or use two installations pgPool-II enabled Watchdog and floating between machines “virtual” IP address (this is the installation in LAB). I describe the solution is the most versatile, can be successfully used to run a cluster of PostgreSQL for vCloud Automation Center (and probably in the future to vCSA 6.0 which will support an external PostgreSQL database).

First, we have to prepare all the vPostgres servers so properly work with Horizon Workspace.

In the file /var/vmware/vpostgres/current/pgdata/postgresql.conf set two variables :

max_connections = 600

search_path = 'saas'

Then restart (as root) vPostgres command: service vpostgres_mon stop / start.

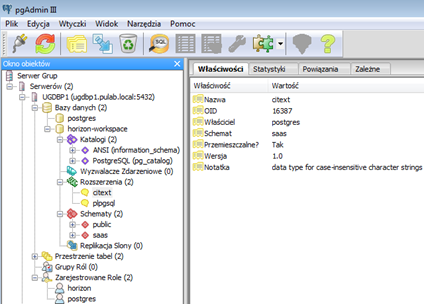

In the next step, configure a main server and replica. We assume a database can have any name, not necessarily saas (the same applies to the owner of the database). It is important to create a scheme called saas, and load extension “citext” showing saas scheme, as in the figure below (without them Workspace will report database errors):

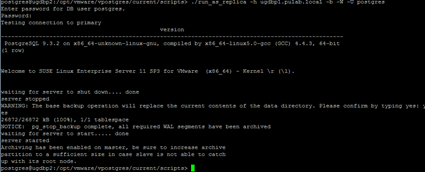

We have prepared the database, next go to the second server, which will be a replica, to the directory: /opt/vmware/vpostgres/current /scripts and execute the following command (as user postgres):

./run_as_replica -h IP_MASTER -b -W -U postgres

The script initiates the conversion of all the settings on both vPostgres servers. Has been completed we will have a properly functioning Master-> Slave.

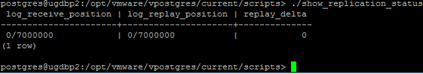

We can check replication status: show_replication_status:

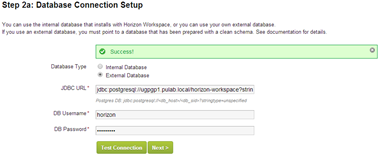

After any failure, We need to execute a script promote_replica_to_primary on slave vPostgres. If you stop at this configuration, after promote the new master server, we need to change the path of the JDBC Connector Horizon Workspace manually:

We have done the initial setup, install and configure now PG_POOL . I have chosen as the basis for installation of CentOS Linux distribution, for many reasons, is free and supported many solutions related to vSphere (such as Trend Micro Deep Security). Unfortunately, in the 64-bit version is not pgPool-II package, you must download and install it manually. Of course you can install it directly in the Appliance vPostgres, but here it must be remembered, that this is not the “standard” distribution, and can cause us problems at the next upgrade (if the mess). Besides, using LoadBalancer, it is easier to generate another pgPool-II node, than the next vPostgres instance.

Configuration pgPool-II is not difficult, all the files are in / etc / pgPool-II. In the file pgpool.conf set the following options:

listen_adress = ‘*’ <— listen ot each interface

port = 5432 <— the default 9999, but it is only when pgpool is installed with PostgreSQL

backend_hostname0 = ‘ugdbp1.pulab.local’ <— first node name

backend_port0 = 5432 <—database port

backend_weight0 = 1 <—priority

backend_hostname1 = ‘ugdbp2.pulab.local’ <—secondary node name

backend_port1 = 5432

backend_weight1 = 1 <— priority, 1 and 1 = 0.5

enable_pool_hba = on <— connecting to pgpool from outside (not only localhost)

pool_passwd = ‘pool_passwd’ <— password file for all users operate on postgres

num_init_children = 300

max_pool = 2 <— connection limit 600=300x2

replication_mode = on <— synchronous write to nodes

load_balance_mode = on <— asynchronous read from nodes

Watchdog configuration is as follows:

use_watchdog = on

trusted_servers = '192.168.60.1' <— gateway for network testing

ping_path = '/bin'

wd_hostname = '192.168.60.134' <— local node name (string)

wd_port = 9000

wd_authkey = ''

delegate_IP = '192.168.60.136' <-- floating IP address

ifconfig_path = '/sbin'

if_up_cmd = 'ifconfig eth1:0 inet $_IP_$ netmask 255.255.255.0'

if_down_cmd = 'ifconfig eth1:0 down'

arping_path = '/usr/sbin'

arping_cmd = 'arping -U $_IP_$ -w 1'

clear_memqcache_on_escalation = on

wd_escalation_command = ''

wd_lifecheck_method = 'heartbeat'

wd_interval = 10

wd_heartbeat_port = 9694

wd_heartbeat_keepalive = 2

wd_heartbeat_deadtime = 30

heartbeat_destination0 = '192.168.60.135' <— secondary watchdog name

heartbeat_destination_port0 = 9694

heartbeat_device0 = 'eth1'

wd_life_point = 3

wd_lifecheck_query = 'SELECT 1'

wd_lifecheck_dbname = 'template1'

wd_lifecheck_user = 'nobody'

wd_lifecheck_password = ''

other_pgpool_hostname0 = '192.168.60.135' <— secondary pgpool node name

other_pgpool_port0 = 5432

other_wd_port0 = 9000

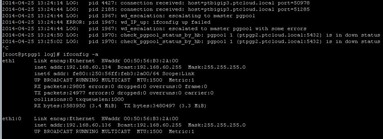

On the second server configuration is exactly the opposite (IP addresses). If everything works correctly, you can easily disable individual servers pgPool-II and address the “virtual” will be properly transferred between machines:

In the file pool_hba.conf set the permitted methods of connection, apply exactly the same principle as in PostgreSQL (we can set host all all 0.0.0.0 / 0 md5). In the file pool_passwd have to enter all users of the database who will connect to PostgreSQL via pgPool-II in the diagram USER: MD5HASH. Important note, in the documentation We see that hash can be generated with command pg_md5, error, the command generates an incorrect hash. Valid be drawn directly from the database (if you use pgAdmin is enough to click on a role). However, if we want to use pgpoolAdmin, in the file pcp.conf saved passwords must be generated by pg_md5. And that’s basically all, pgPool-II is completely transparent, does not generate load on the machine, a super solution.

Finally, a few words about the failure. For Master-> Slave scheme is known, if the master fail, the other node is promoted to the role of master and We switch the application on the server. After removal of the accident, just strapped recovered server as a slave. If we have two active Nodes, the failure of one does not affect the application, pgPool-II simply close the connection on fail node and all requests forward to the second. After removal of the accident, absolutely we can not just run of the recovered server. Turn off the application and made cohesive database (connect as slave, everything will copy itself, and promote the server), and after that, turn on the application. The difference apparently comes down to one, in the first variant, we need to disable the application when we have, and the second, when we wants! ![]()

Was this information is helpful? Tell me, please leave a comment!